Create and Manage a Kubernetes Cluster from Scratch using AWS EC2 instances

Hey 👋, I'm Ayoub Essare, a DevOps and Cloud Enthusiast & Full-stack Engineer based in Montreal, Canada.

Kubernetes has become the go-to solution for container orchestration, offering robust capabilities to automate the deployment, scaling, and management of containerized applications. In this blog post, we will walk through creating a Kubernetes cluster from scratch that follows best practices, using the kubeadm cluster bootstrapping utility. Even if you intend to use fully managed Kubernetes clusters, this tutorial gives you a deeper understanding of how Kubernetes clusters work and helps you decide on the cluster configuration that best fits your requirements.

Blog Post Objectives

Upon completion of this tutorial, you will be able to:

Install kubeadm and its dependencies

Initialize a control-plane node

Join a node to a cluster

Configure a network plugin

Prerequisites

You should be familiar with:

Working with Kubernetes to deploy applications

Working at the command line in Linux

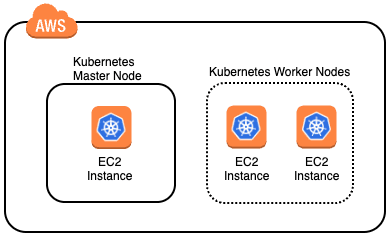

By the end of this blog post, you will be able to create a K8s cluster with one master node and two worker nodes.

This tutorial uses Amazon Web Services (AWS), and we'll work in the AWS Management Console.

The AWS Management Console is a web control panel for managing all your AWS resources, from EC2 instances to SNS topics. The console enables cloud management for all aspects of the AWS account, including managing security credentials and even setting up new IAM Users.

Creating 3 AWS EC2 Instances

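Start by creating three Ubuntu instances. kubeadm requires at least 2 CPUs on the control-plane node and 2 GB of RAM per machine, so choose an instance type such as t3.medium (2 vCPUs, 4 GiB), and attach a security group that allows traffic between the three instances. Use the following names: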

instance-a

instance-b

instance-c

Connecting to an EC2 Instance

We will connect to the three Amazon EC2 instances and configure them as a Kubernetes cluster, starting with instance-a. In later steps, we'll connect to instance-b and instance-c.

Now that our three EC2 instances are up and running, let's connect to instance-a using EC2 Instance Connect:

Right-click the instance named instance-a, click Connect, and then click Connect on the EC2 Instance Connect tab.

A browser-based shell opens in a new window.

Now that we are connected to instance-a, let's look at what kubeadm is.

kubeadm is a tool that lets you easily create Kubernetes clusters. It can also perform a variety of cluster lifecycle tasks, such as upgrading the version of Kubernetes on the nodes in a cluster. We will use kubeadm to create a Kubernetes cluster from scratch. kubeadm is well suited to learning Kubernetes and creating small clusters, and it also serves as a building block for more complex, enterprise-ready cluster tooling.

Installing Kubeadm and its Dependencies

Our three EC2 instances run the Ubuntu distribution of Linux. We will configure instance-a as the Kubernetes control-plane node and the other instances as worker nodes in the cluster. In this step, we will install kubeadm and its dependencies, including containerd.

- Enter the following commands to update the apt package index and install the packages needed to access HTTPS package repositories, such as the containerd repository:

# Update the package index

sudo apt-get update

# Install packages required for HTTPS package repository access

sudo apt-get install -y apt-transport-https ca-certificates curl software-properties-common gnupg lsb-release

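- Load the overlay kernel module (used by containerd's overlay filesystem snapshotter) and the br_netfilter module (which lets iptables see bridged traffic), now and on every boot, with the following commands: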

# Load the overlay and br_netfilter modules now, and persist them across reboots

sudo modprobe overlay

sudo modprobe br_netfilter

cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf
overlay
br_netfilter
EOF
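To confirm both modules are loaded, you could run a small check like the one below. It is a minimal sketch: the helper name missing_modules is hypothetical, and it reads /proc/modules (the file lsmod reads), so a module compiled directly into the kernel would not be listed there even though it is available.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints each required kernel module that is not listed
# in the module table (defaults to /proc/modules, the file lsmod reads).
missing_modules() {
  local modules_file="${1:-/proc/modules}"
  local mod
  for mod in overlay br_netfilter; do
    # Each line of /proc/modules starts with the module name followed by a space.
    grep -q "^${mod} " "$modules_file" || echo "$mod"
  done
}

# Usage on a node (empty output means both modules are loaded):
# missing_modules
```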

- Allow the Linux node's iptables to correctly see bridged traffic, and enable IPv4 forwarding, with the following commands:

# sysctl params required by setup, params persist across reboots

cat <<EOF | sudo tee /etc/sysctl.d/99-kubernetes-cri.conf
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
net.bridge.bridge-nf-call-ip6tables = 1
EOF

# Apply sysctl params without reboot

sudo sysctl --system
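sudo sysctl --system prints every setting it applies, which is easy to scroll past. A minimal sketch of a check, assuming bash and awk, reads "key = value" lines (the format of both the drop-in file and sysctl's own output) and reports any of the three required keys that is missing or not set to 1. The function name check_k8s_sysctls is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads "key = value" lines on stdin and reports any
# required Kubernetes networking sysctl that is missing or not set to 1.
# Exits 0 only when all three keys are present with the value 1.
check_k8s_sysctls() {
  awk -F' *= *' '
    BEGIN {
      want["net.bridge.bridge-nf-call-iptables"] = 1
      want["net.bridge.bridge-nf-call-ip6tables"] = 1
      want["net.ipv4.ip_forward"] = 1
    }
    ($1 in want) && $2 == "1" { ok[$1] = 1 }
    END {
      bad = 0
      for (k in want) if (!(k in ok)) { print "not set to 1: " k; bad = 1 }
      exit bad
    }'
}

# Usage on a node, checking the live kernel values:
# sysctl net.bridge.bridge-nf-call-iptables net.bridge.bridge-nf-call-ip6tables net.ipv4.ip_forward | check_k8s_sysctls
```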

- Install containerd using the DEB package distributed by Docker with the following commands:

# Add Docker’s official GPG key

curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo gpg --dearmor -o /usr/share/keyrings/docker-archive-keyring.gpg

# Set up the repository

echo \
  "deb [arch=amd64 signed-by=/usr/share/keyrings/docker-archive-keyring.gpg] https://download.docker.com/linux/ubuntu \
  $(lsb_release -cs) stable" | sudo tee /etc/apt/sources.list.d/docker.list > /dev/null

# Install containerd

sudo apt-get update

sudo apt-get install -y containerd.io=1.6.18-1

Note: This is only one way of installing containerd; the containerd documentation describes other options, such as installing the official release binaries.

- Configure the systemd cgroup driver with the following commands:

# Configure the systemd cgroup driver

sudo mkdir -p /etc/containerd

containerd config default | sudo tee /etc/containerd/config.toml

sudo sed -i 's/SystemdCgroup = false/SystemdCgroup = true/' /etc/containerd/config.toml

sudo systemctl restart containerd

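This is required because Ubuntu uses systemd as its init system. If containerd used the cgroupfs driver while systemd also manages cgroups, the node would have two cgroup managers with different views of the available and in-use resources, which can make it unstable under resource pressure. Since Kubernetes v1.22, kubeadm configures the kubelet to use the systemd cgroup driver by default, so containerd must use it too.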
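sed exits successfully even when its pattern matches nothing, so it is worth confirming that the setting actually changed. The sketch below defines a hypothetical helper, systemd_cgroup_enabled, that checks a containerd config file for SystemdCgroup = true, and demonstrates the tutorial's sed edit on a small sample fragment shaped like the runc options section that containerd config default generates (the fragment is an illustration, not a full config).

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeeds if the containerd config enables the systemd
# cgroup driver for runc (defaults to the path used in this tutorial).
systemd_cgroup_enabled() {
  local config="${1:-/etc/containerd/config.toml}"
  grep -Eq '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' "$config"
}

# Demonstrate the tutorial's sed edit on a sample fragment in a temporary file.
sample=$(mktemp)
cat > "$sample" <<'EOF'
[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]
  SystemdCgroup = false
EOF
systemd_cgroup_enabled "$sample" || echo "before sed: systemd cgroup driver disabled"
sed -i 's/SystemdCgroup = false/SystemdCgroup = true/' "$sample"
systemd_cgroup_enabled "$sample" && echo "after sed: systemd cgroup driver enabled"
rm -f "$sample"

# Usage on a node, after restarting containerd:
# systemd_cgroup_enabled && echo "containerd uses the systemd cgroup driver"
```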

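- Install kubeadm, kubectl, and kubelet from the official, community-owned Kubernetes package repository (pkgs.k8s.io). The legacy Google-hosted repository (apt.kubernetes.io) has been deprecated and frozen, so these commands use the Kubernetes v1.28 repository on pkgs.k8s.io:

# Add the public signing key for the Kubernetes v1.28 package repository

sudo mkdir -p -m 755 /etc/apt/keyrings

curl -fsSL https://pkgs.k8s.io/core:/stable:/v1.28/deb/Release.key | sudo gpg --dearmor -o /etc/apt/keyrings/kubernetes-apt-keyring.gpg

# Add the Kubernetes v1.28 repository

echo 'deb [signed-by=/etc/apt/keyrings/kubernetes-apt-keyring.gpg] https://pkgs.k8s.io/core:/stable:/v1.28/deb/ /' | sudo tee /etc/apt/sources.list.d/kubernetes.list

# Update the package index to include the Kubernetes repository

sudo apt-get update

# Install the packages ("apt-cache madison kubeadm" lists the available versions)

sudo apt-get install -y kubeadm=1.28.1-1.1 kubelet=1.28.1-1.1 kubectl=1.28.1-1.1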

- Prevent automatic updates to the installed packages with the following command:

sudo apt-mark hold kubelet kubeadm kubectl

All of the packages are pinned to version 1.28.1 for consistency in this tutorial, and so that we can perform a cluster upgrade in a later blog post.
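Because the same packages must end up on all three instances, a quick consistency check can save debugging later. The sketch below is hypothetical (check_k8s_packages is not part of any tool): it uses apt-mark showhold to confirm the three packages are held and kubeadm version -o short to confirm the installed version, assuming the v1.28.1 target used in this tutorial. You could run it on each instance.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reports Kubernetes packages that are not held by apt and
# a kubeadm version that differs from the expected one. Exits non-zero on any problem.
check_k8s_packages() {
  local expected="${1:-v1.28.1}"
  local held actual pkg status=0
  held=$(apt-mark showhold)
  for pkg in kubeadm kubelet kubectl; do
    if ! grep -qx "$pkg" <<<"$held"; then
      echo "not held: $pkg"
      status=1
    fi
  done
  actual=$(kubeadm version -o short)
  if [ "$actual" != "$expected" ]; then
    echo "kubeadm version is $actual, expected $expected"
    status=1
  fi
  return "$status"
}

# Usage on each instance:
# check_k8s_packages v1.28.1 && echo "packages pinned and held"
```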

- Display the help page for kubeadm:

kubeadm

Read through the output to get a high-level overview of how a cluster is created and the commands that are available in kubeadm.

After setting up instance-a, repeat the same steps on instance-b and instance-c: right-click the instance named instance-b, click Connect, and run all of the commands above in its shell. Then do the same for instance-c.

Now that kubeadm is installed on all three EC2 instances, reconnect to instance-a to configure it as the master node of our Kubernetes cluster.

Initializing the Kubernetes Master Node

We will use kubeadm to initialize the control-plane node. The initialization process will create a certificate authority for secure cluster communication and authentication, and start all the node components (kubelet), control-plane components (API server, controller manager, scheduler, etcd), and common add-ons (kube-proxy, DNS). You will see how easy the initialization process is with kubeadm.

The initialization uses sensible default values that follow best practices. However, many command options are available to customize the process, for example to provide your own certificate authority or to use an external etcd key-value store. kubeadm does not install a pod network plugin for you, so you will install Calico after the control plane is initialized. Calico supports Kubernetes network policies, and it needs the range of IP addresses for the pod network to be set up front, so you will pass the --pod-network-cidr option when initializing the control-plane node with kubeadm.

There are many network plugins besides Calico. Calico is used here because it is common in Kubernetes certification exam environments and it supports network policies. It is also widely used in production and is supported for network policy in the managed Kubernetes offerings of AWS, Azure, and GCP. However, if all of your environments live in AWS, you may consider the Amazon VPC CNI plugin.
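This tutorial uses Calico's default pod range, 192.168.0.0/16, which must not overlap the VPC's CIDR. Two IPv4 CIDR blocks overlap exactly when they agree on the leading bits of the shorter prefix, and the bash sketch below implements that test so you can check before running kubeadm init. The function names are hypothetical, and 10.0.0.0/16 is only an assumed VPC CIDR (consistent with the 10.0.0.100 address in the join example later); substitute the CIDR shown for your VPC in the VPC console.

```shell
#!/usr/bin/env bash
# Hypothetical helper: converts a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local IFS=.
  local -a o
  read -r -a o <<<"$1"
  # 10# forces base 10 so an octet such as 08 is not parsed as octal.
  echo $(( (10#${o[0]} << 24) | (10#${o[1]} << 16) | (10#${o[2]} << 8) | 10#${o[3]} ))
}

# Hypothetical helper: succeeds if two CIDR blocks share any address, i.e. the
# network addresses agree on the first min(prefix_a, prefix_b) bits.
cidrs_overlap() {
  local a_ip=${1%/*} a_len=${1#*/} b_ip=${2%/*} b_len=${2#*/}
  local a b len mask
  a=$(ip_to_int "$a_ip")
  b=$(ip_to_int "$b_ip")
  len=$(( a_len < b_len ? a_len : b_len ))
  mask=$(( (0xFFFFFFFF << (32 - len)) & 0xFFFFFFFF ))
  (( (a & mask) == (b & mask) ))
}

pod_cidr=192.168.0.0/16
vpc_cidr=10.0.0.0/16   # assumption: replace with your VPC's CIDR
if cidrs_overlap "$pod_cidr" "$vpc_cidr"; then
  echo "$pod_cidr overlaps $vpc_cidr: choose another --pod-network-cidr"
else
  echo "$pod_cidr does not overlap $vpc_cidr"
fi
```

Bash arithmetic is 64-bit, which is why the shifted mask is trimmed back to 32 bits with & 0xFFFFFFFF.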

- Initialize the control-plane node using the init command:

sudo kubeadm init --pod-network-cidr=192.168.0.0/16 --kubernetes-version=stable-1.28

The pod network CIDR block (192.168.0.0/16) is the default used by Calico. It does not overlap with the Amazon VPC CIDR used in this tutorial; if it did, you would need to choose a different range and configure Calico to use it. The output reports each step kubeadm takes to initialize the control plane.

Read through the output to understand what is happening. At the end of the output, kubeadm prints useful commands for configuring kubectl and for joining worker nodes to the cluster.

- Copy the kubeadm join command at the end of the output and store it somewhere you can access later.

Reusing the printed command is convenient, although you can also create new tokens and regenerate the command with the kubeadm token command. Join tokens expire after 24 hours by default.
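If you lose the stored command or its token expires, running sudo kubeadm token create --print-join-command on the control-plane node prints a fresh one. The --discovery-token-ca-cert-hash value can also be recomputed from the cluster CA certificate using the openssl pipeline from the Kubernetes documentation, wrapped below in a hypothetical ca_cert_hash function. It assumes openssl is installed and that the CA key is RSA, which is what kubeadm generates by default.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints the discovery hash for "kubeadm join", i.e. the
# SHA-256 digest of the CA certificate's DER-encoded public key, prefixed "sha256:".
ca_cert_hash() {
  local ca_cert="${1:-/etc/kubernetes/pki/ca.crt}"
  openssl x509 -pubkey -noout -in "$ca_cert" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* /sha256:/'
}

# Usage on the control-plane node:
# sudo kubeadm token create --print-join-command   # new token plus the full join command
# ca_cert_hash                                      # only the --discovery-token-ca-cert-hash value
```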

- Initialize your user's default kubectl configuration using the admin kubeconfig file generated by kubeadm:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

- Confirm you can use kubectl to get the cluster component statuses:

kubectl get componentstatuses

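The output confirms that the scheduler, controller-manager, and etcd are all Healthy. The Kubernetes API server is also operational, or kubectl would have returned an error attempting to connect to it. Note that the ComponentStatus API has been deprecated since Kubernetes v1.19, so kubectl prints a deprecation warning; kubectl get --raw='/readyz?verbose' is an alternative way to check the API server's health. Enter kubeadm token --help if you would like to know more about kubeadm tokens.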

Get the nodes in the cluster:

kubectl get nodes

The control-plane node is reporting a STATUS of NotReady. Notice that kubeadm gives the node a NAME based on its hostname, which on EC2 is derived from the instance's private IP address; the --node-name option can be used to override this default. Describe the node to probe deeper into its NotReady status:

kubectl describe nodes

In the Conditions section of the output, observe that the Ready condition is False, and read its Message.

The kubelet is not ready because the network plugin is not ready. The "cni config uninitialized" part of the message refers to the Container Network Interface (CNI), the interface that network plugins implement, and points to the same problem. You will resolve the issue by installing the Calico network plugin.
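Reading the Conditions section works well for a single node; once the cluster has several, it can help to print only the nodes whose Ready condition is not True, together with the condition's message. The sketch below uses kubectl's JSONPath output and awk; the function name not_ready_nodes is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints "name: message" for every node whose Ready
# condition status is not "True" (for example "False" or "Unknown").
not_ready_nodes() {
  local jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.status.conditions[?(@.type=="Ready")].status}{"\t"}{.status.conditions[?(@.type=="Ready")].message}{"\n"}{end}'
  kubectl get nodes -o jsonpath="$jsonpath" | awk -F'\t' '$2 != "True" { print $1 ": " $3 }'
}

# Usage on the control-plane node (empty output means every node is Ready):
# not_ready_nodes
```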

- Enter the following command to install the Calico network plugin for pod networking:

kubectl apply -f https://clouda-labs-assets.s3.us-west-2.amazonaws.com/k8s-common/1.28/scripts/calico.yaml

A variety of resources are created to support pod networking. A DaemonSet runs a calico-node pod on each node in the cluster. The resources also include several custom resource definitions (customresourcedefinition) that extend the Kubernetes API, for example to support Calico network policies (networkpolicies.crd.projectcalico.org). Many network plugins have a similar installation procedure.

- Watch the status of the nodes in the cluster:

watch kubectl get nodes

With the network plugin initialized, the control-plane node is now Ready. Note: It may take a minute to reach the Ready state.

Press ctrl+c to stop watching the nodes.
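In a script, kubectl wait --for=condition=Ready nodes --all --timeout=300s is the built-in way to block until nodes are Ready, but it only considers nodes that have already registered. The polling sketch below (the name wait_for_ready_nodes is hypothetical) instead waits until a given number of nodes report Ready: 1 at this point, and 3 once both worker nodes have joined.

```shell
#!/usr/bin/env bash
# Hypothetical helper: polls "kubectl get nodes" until at least EXPECTED nodes
# are Ready, or TIMEOUT seconds (default 300) have elapsed.
wait_for_ready_nodes() {
  local expected="$1" timeout="${2:-300}" waited=0 ready
  while true; do
    # STATUS is the second column; "NotReady" matches neither pattern.
    ready=$(kubectl get nodes --no-headers 2>/dev/null \
      | awk '$2 == "Ready" || $2 ~ /^Ready,/ { n++ } END { print n + 0 }')
    if [ "$ready" -ge "$expected" ]; then
      echo "$ready/$expected nodes Ready"
      return 0
    fi
    if [ "$waited" -ge "$timeout" ]; then
      echo "timed out: $ready/$expected nodes Ready" >&2
      return 1
    fi
    sleep 5
    waited=$((waited + 5))
  done
}

# Usage on the control-plane node:
# wait_for_ready_nodes 1 300
```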

Joining a Worker Node to the Kubernetes Cluster

The process of adding a worker node with kubeadm is even simpler than initializing a control-plane node. You will join a worker node to the cluster using the command that kubeadm init printed at the end of its output.

- Open a second terminal connected to instance-b that is listed in the EC2 Console.

Refer back to the earlier steps on connecting to instance-a using EC2 Instance Connect, if required.
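A common reason for kubeadm join to hang is that the worker cannot reach the API server, typically because the security group does not allow TCP port 6443 to the control-plane instance. Before joining, you could check reachability from the worker with a sketch like the one below. Both functions are hypothetical; the check relies on bash's built-in /dev/tcp redirection and the coreutils timeout command, and the join command in the usage comment is the elided placeholder from this post, not a real token.

```shell
#!/usr/bin/env bash
# Hypothetical helper: prints the API server endpoint (host:port) that follows
# the word "join" in a stored kubeadm join command.
join_endpoint() {
  awk '{ for (i = 1; i < NF; i++) if ($i == "join") { print $(i + 1); exit } }' <<<"$1"
}

# Hypothetical helper: succeeds if a TCP connection to host:port opens within 3 seconds.
endpoint_reachable() {
  local host="${1%:*}" port="${1##*:}"
  timeout 3 bash -c "exec 3<>/dev/tcp/${host}/${port}" 2>/dev/null
}

# Usage on the worker (paste your stored command between the quotes):
# ep=$(join_endpoint 'sudo kubeadm join 10.0.0.100:6443 --token ... --discovery-token-ca-cert-hash sha256:...')
# endpoint_reachable "$ep" && echo "$ep reachable" || echo "$ep unreachable: check that the security group allows TCP 6443"
```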

- Enter sudo followed by the kubeadm join command that you stored from the output of kubeadm init. It resembles:

sudo kubeadm join 10.0.0.100:6443 --token ... --discovery-token-ca-cert-hash sha256:...

Read through the output to understand the operations kubeadm performed.

- In the control-plane node's SSH shell, confirm the worker node is part of the cluster:

kubectl get nodes

The worker node appears with a role of <none>.

Note: It may take a minute for the worker node to become Ready.
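The ROLES column is derived from node labels of the form node-role.kubernetes.io/<role>, so <none> is cosmetic. If you prefer the workers to show a role, you can label them from the control-plane node (a kubelet is not allowed to set this label on its own node). The sketch below labels every node currently showing <none>; label_workers is a hypothetical name, and worker is simply a conventional role name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: adds the conventional worker role label to every node
# whose ROLES column (third field of "kubectl get nodes") is <none>.
label_workers() {
  kubectl get nodes --no-headers | awk '$3 == "<none>" { print $1 }' |
    while read -r node; do
      kubectl label node "$node" node-role.kubernetes.io/worker= --overwrite
    done
}

# Usage on the control-plane node, after the workers have joined:
# label_workers && kubectl get nodes
```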

Confirm that all the pods in the cluster are running:

kubectl get pods --all-namespaces

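All of the pods are Running, and the cluster is operational. Notice that a calico-node pod, created by the Calico DaemonSet, runs on each node to support pod networking. Repeat the join steps on instance-c to add the second worker node, so that the cluster has one control-plane node and two worker nodes.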
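Because calico-node runs as a DaemonSet, a healthy cluster has one Running calico-node pod per node (kubectl rollout status daemonset/calico-node -n kube-system reports the same thing in rollout terms). The check below compares the two counts directly. It assumes the manifest labels the pods k8s-app=calico-node, as the upstream Calico manifest does, and the function name is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: compares the number of nodes with the number of Running
# calico-node pods in kube-system. Exits 0 when the counts match.
calico_pods_per_node_ok() {
  local nodes running
  nodes=$(kubectl get nodes --no-headers | wc -l)
  # STATUS is the third field of "kubectl get pods --no-headers" within one namespace.
  running=$(kubectl get pods -n kube-system -l k8s-app=calico-node --no-headers \
    | awk '$3 == "Running"' | wc -l)
  echo "calico-node pods Running: $running, nodes: $nodes"
  [ "$running" -eq "$nodes" ]
}

# Usage on the control-plane node:
# calico_pods_per_node_ok
```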

Summary

In this blog post, we transformed three plain EC2 instances into a Kubernetes cluster with one master node and two worker nodes, and configured Calico as the pod network plugin.